ChatGPT and similar new models like Stable Diffusion have absolutely amazing capabilities and potential. However, how useful are they in daily life? Clearly, they are great for ideation, completion and playing around, and many examples exist. But with Microsoft integrating ChatGPT in Bing and Google scrambling to finish their Bard, the question arises: “Why?”

To me, that does not make sense, as these models were not created with that goal in mind. Many searches on Google are for information or for a source that can give you the information you need. I argue that these models do not know enough about the world to be useful replacements for search engines. Enough has been said about the dangers of ChatGPT’s lack of citations and its certainty of being right when it is wrong. I argue that we should not use such models in this literal manner. Arguing is one thing, demonstrating is better, so… let me demonstrate in a manner that hopefully shows you why asking literal or real-world things is not the right way of using these cool new tools.

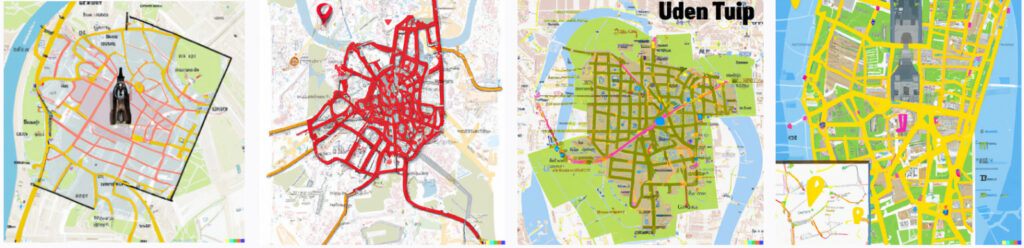

Imagine I want to navigate Utrecht (a moderate-sized city in the Netherlands). I want to go from Utrecht Central Station to the Dom tower in the historic centre. First, let me ask one of the largest image generation models for a map, using the prompt:

“A realistic map that is suitable for navigation of the city center of Utrecht in the Netherlands”

The result is somewhat similar to how I know Utrecht looks, with canals, concentric rings of roads and highways connecting to the outside. It might be my age or having grown unaccustomed to map reading, but it will be a challenge to find my way with this map. And let’s pretend we are not further confused by the “Ciuh Cleck” title of the map and assume that it is AI-ish for Utrecht.

Let’s try again, this time asking for some sort of walking route indicated on the map:

“A realistic map that is suitable for navigation of the innercity center of Utrecht in the Netherlands, highlight the route from Utrecht Central Station to De Dom tower”

Again, not really helpful. But at least I now somewhat recognize the Dom tower on the left one… wait no, the Dom looks different.

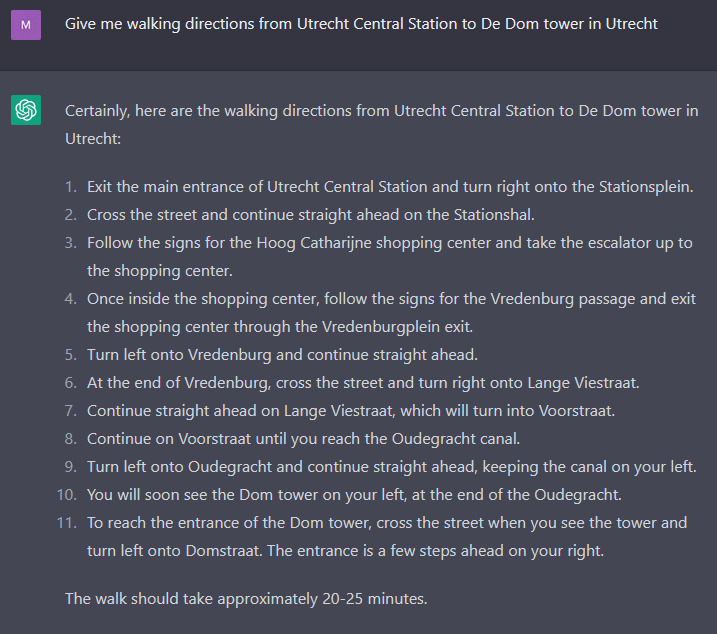

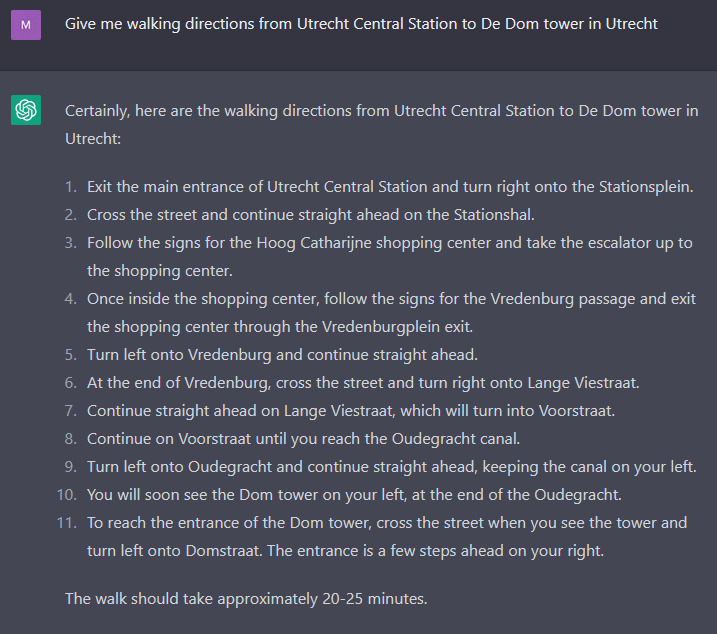

OK, let’s move to ChatGPT (finally). Perhaps it can give me some walking instructions. The prompt:

“Give me walking directions from Utrecht Central Station to De Dom tower in Utrecht”

This does give a very reasonable-sounding list of directions.

I am impressed! At first glance, the directions seem reasonable, but the proof is in the pudding. Let’s try walking these instructions.

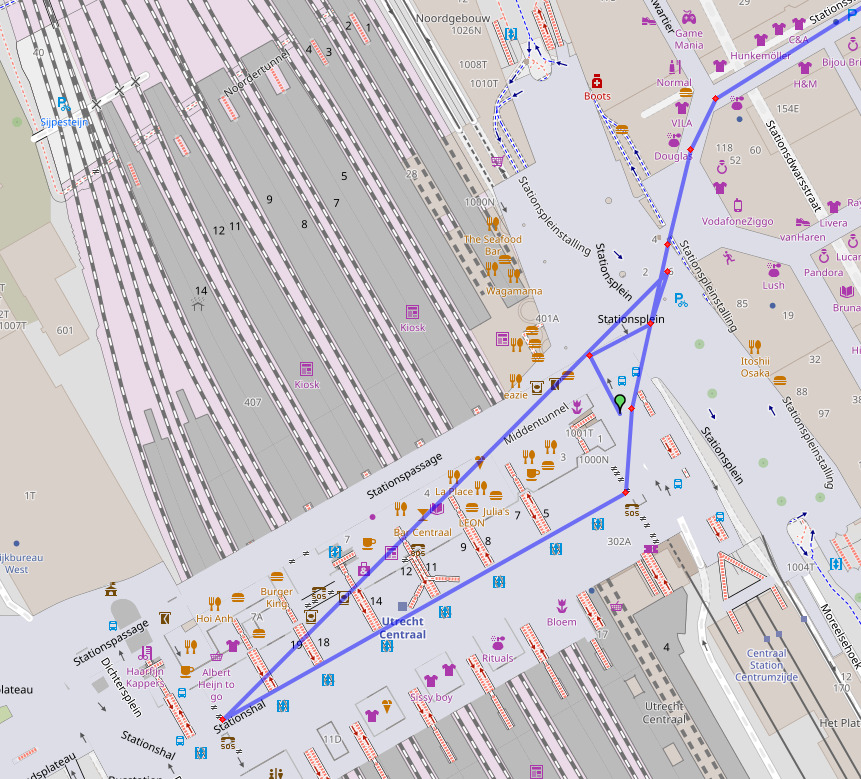

- Exit the main entrance of Utrecht Central Station and turn right onto the Stationsplein.

- Cross the street and continue straight ahead on the Stationshal.

- Follow the signs for the Hoog Catharijne shopping center and take the escalator up to the shopping center.

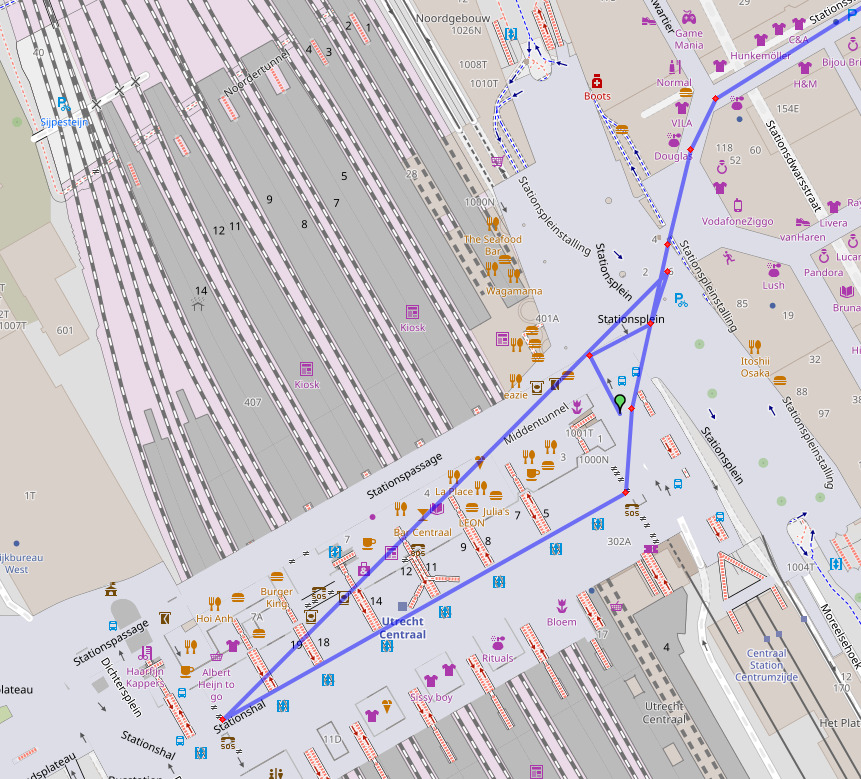

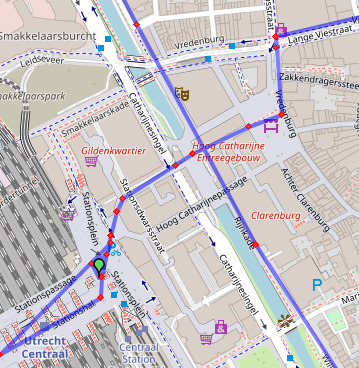

Up to step three it is going well, although I was a bit confused about how to exit the train station and… there is no escalator up to the shopping centre, but hey… you don’t see that on a map.

Keep going, we are doing well!

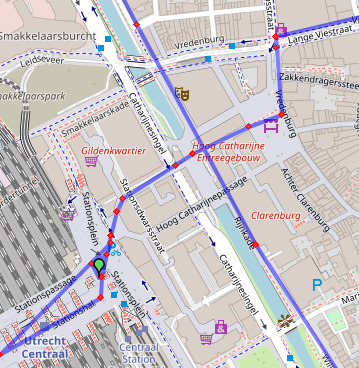

- Once inside the shopping center, follow the signs for the Vredenburg passage and exit the shopping center through the Vredenburgplein exit.

- Turn left onto Vredenburg and continue straight ahead.

- At the end of Vredenburg, cross the street and turn right onto Lange Viestraat.

OK, knowing my way around Utrecht I would have turned right on Vredenburg… and most tourists would too as you can pretty much see the Dom tower from there. But perhaps ChatGPT is on to something (a nice tour of the city perhaps)… or maybe not (as you can already see we will be here again). Carrying on:

- Continue straight ahead on Lange Viestraat, which will turn into Voorstraat.

- Continue on Voorstraat until you reach the Oudegracht canal.

- Turn left onto Oudegracht and continue straight ahead, keeping the canal on your left.

- You will soon see the Dom tower on your left, at the end of the Oudegracht.

- To reach the entrance of the Dom tower, cross the street when you see the tower and turn left onto Domstraat. The entrance is a few steps ahead on your right.

Wow! Eventually, you do get to the Dom tower (with some very liberal interpretation of step 9’s “keep the canal on your left”). The walk will take you considerably longer than the advertised 20-25 minutes though. I am impressed that it got me to my destination, but for now, I will stick to Google or paper maps.

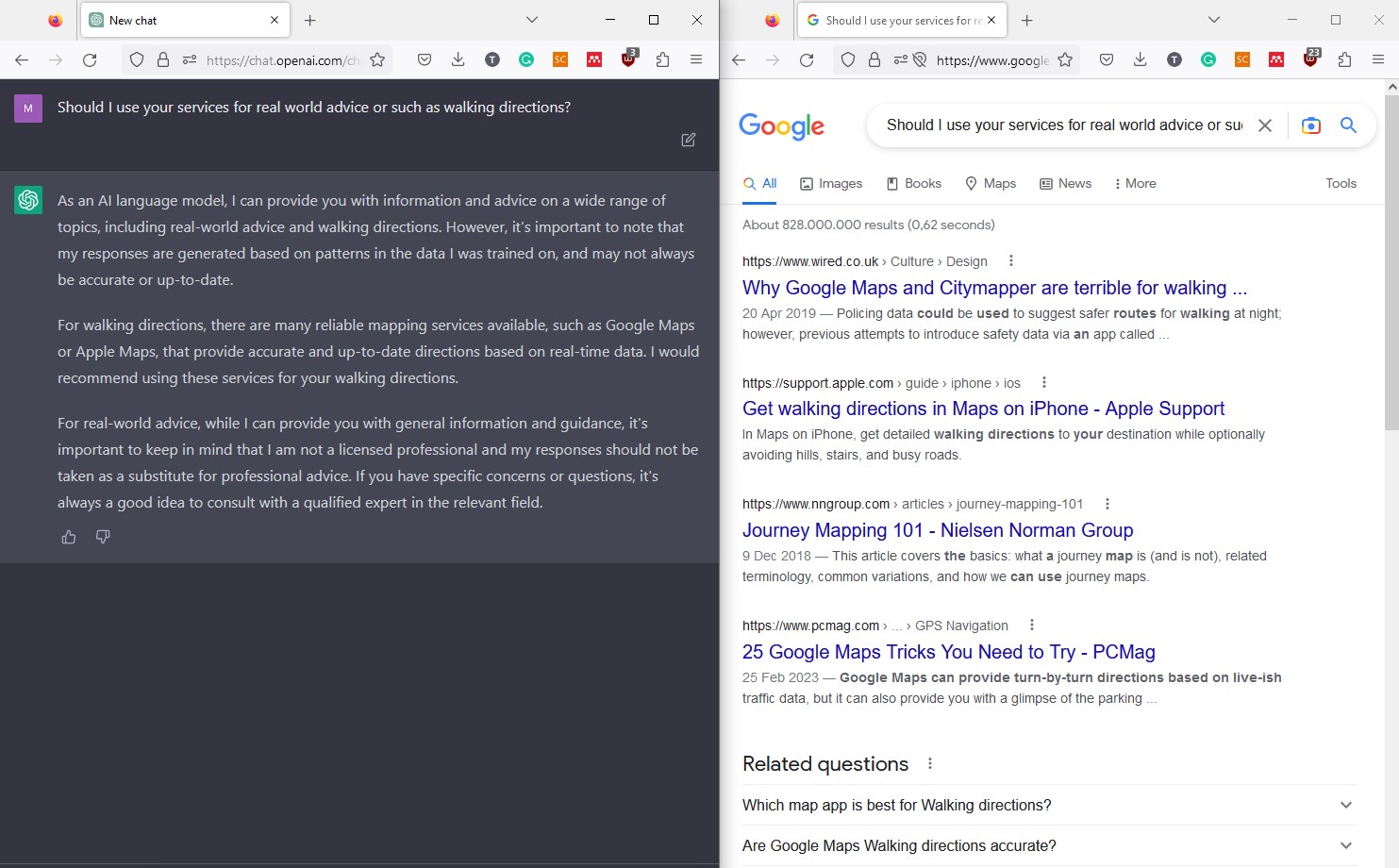

This demonstration hopefully shows that amazing models such as ChatGPT are created with a specific task in mind and that outside of this task they cannot perform well. I think the fact that it can still perform tasks outside the domain of “guess the next word” is absolutely amazing and I can see the appeal of trying what it can and cannot do, as evidenced by this blog post. However, it is something else to put it in the place of a search engine, where people have grown accustomed to finding (mostly) correct information. Google and other search engines do not know everything, but clicking on a link in the search results brings you to a website where you can somewhat establish the credibility of the information. With generative models like ChatGPT this is considerably harder. Does that mean we should not use this technology at all? Let’s ask ChatGPT and Google… I leave the trial walking up to you.

We\Visit makes virtual hospital visits possible for families and patients when hospital access is limited. In the ad-hoc corona project, TU Delft engineers, designers and psychologists are developing an online communication platform that connects families with their loved ones in difficult times. With We\Visit remote hospital visits are made possible by scheduling video calls when patients or family members are in isolation or when visits to the care facility are limited and even cancelled.

We\Visit makes virtual hospital visits possible for families and patients when hospital access is limited. In the ad-hoc corona project, TU Delft engineers, designers and psychologists are developing an online communication platform that connects families with their loved ones in difficult times. With We\Visit remote hospital visits are made possible by scheduling video calls when patients or family members are in isolation or when visits to the care facility are limited and even cancelled. “Visiting a loved one who’s critically ill: normally that’s possible, but due to the coronavirus, visiting ICUs is no longer permitted. The result is a lonely fight behind closed doors. In an attempt to combat the loneliness, researchers at Delft University of Technology have come up with We\Visit: a tool that facilitates video-calling by appointment. And it was developed at lightning speed after the Reinier de Graaf hospital sounded the alarm.”

“Visiting a loved one who’s critically ill: normally that’s possible, but due to the coronavirus, visiting ICUs is no longer permitted. The result is a lonely fight behind closed doors. In an attempt to combat the loneliness, researchers at Delft University of Technology have come up with We\Visit: a tool that facilitates video-calling by appointment. And it was developed at lightning speed after the Reinier de Graaf hospital sounded the alarm.” Wow, I got a position at the Delft University of Technology at the

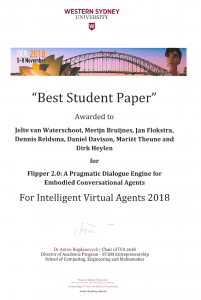

Wow, I got a position at the Delft University of Technology at the  Sydney Australia is about as far away from home as possible. The trip to attend the IVA2018 conference in Sydney was long. So why not stay for a little while to make some new (science) friends? “I was here anyway”, I told Anton Bogdanovych who was kind enough to host me at the Western Sydney University.

Sydney Australia is about as far away from home as possible. The trip to attend the IVA2018 conference in Sydney was long. So why not stay for a little while to make some new (science) friends? “I was here anyway”, I told Anton Bogdanovych who was kind enough to host me at the Western Sydney University. Flipper 2.0 aims to help developers of embodied conversational agents (ECAs) quickly and flexibly create dialogues. Jelte van Waterschoot presented our

Flipper 2.0 aims to help developers of embodied conversational agents (ECAs) quickly and flexibly create dialogues. Jelte van Waterschoot presented our  During the conference, I organised a

During the conference, I organised a  Visiting European research project partners is great: seeing the places, trying the food, meeting the people. It is only fair to return the favour from time to time. In the Council of Coaches project many partners are from Twente, so it makes a lot of sense (less people traveling) to have meetings in Twente. We were the hosts for two technical integration meetings, April 23-26 and October 8-12. We had guests from the University of Dundee in the UK, the Sorbonne University in France, the Polytechnic University Valencia in Spain, and the Danish Board of Technology Foundation.

Visiting European research project partners is great: seeing the places, trying the food, meeting the people. It is only fair to return the favour from time to time. In the Council of Coaches project many partners are from Twente, so it makes a lot of sense (less people traveling) to have meetings in Twente. We were the hosts for two technical integration meetings, April 23-26 and October 8-12. We had guests from the University of Dundee in the UK, the Sorbonne University in France, the Polytechnic University Valencia in Spain, and the Danish Board of Technology Foundation.